Won 69% of the contests

"If you don't pay for it, you are the product." Facebook creates detailed profiles on both its users and people who don't have an account. It's a dream come true for any spy agency: the world's biggest people database, where people report on themselves and on each other. Its algorithmic newsfeed has a huge influence on how its users see the world, allowing companies and governments to manipulate their perception, and even their mood.

Won 68% of the contests

This company works for landlords. Renters are "...required to grant it full access to your Facebook, LinkedIn, Twitter and/or Instagram profiles. From there, Tenant Assured scrapes your site activity, including entire conversation threads and private messages; runs it through natural language processing and other analytic software; and finally, spits out a report that catalogs everything from your personality to your 'financial stress level'."

Won 68% of the contests

This company creates detailed psychological profiles from your data, and then uses them to influence elections. They know what messages you will be most susceptible to. They worked on the Trump campaign.

Won 68% of the contests

You get a better deal on insurance as long as you and your friends don't make any claims. This increases social pressure to not make claims, which could lead to bad situations. It also undermines a core idea behind insurance: solidarity with those less fortunate. Segmenting the market could mean higher premiums for the poor.

Won 68% of the contests

This company proposes to store highly sensitive information on the blockchain: a technology that offers no way to delete data once it is stored, and works by spreading your data across thousands of computers.

Won 68% of the contests

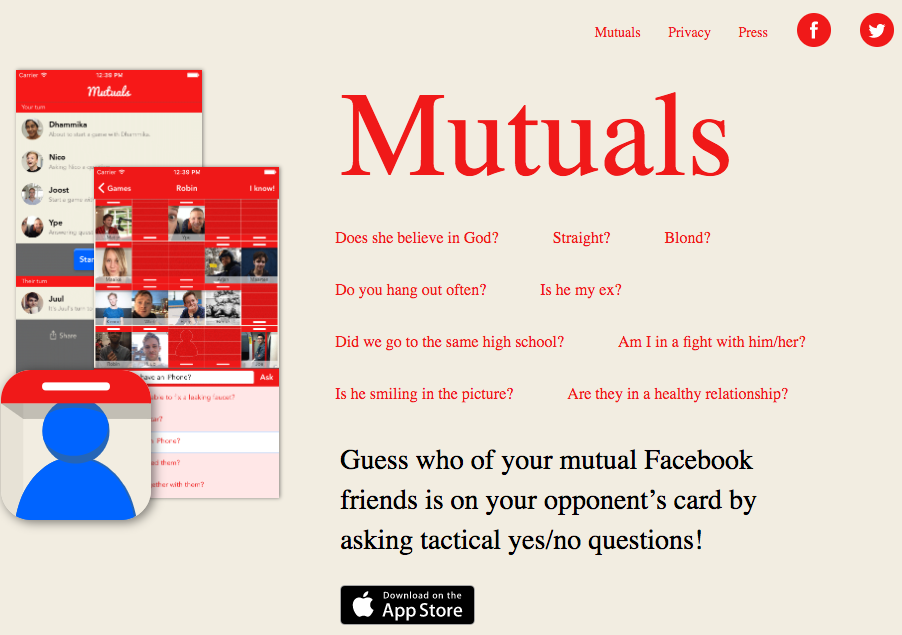

Remember the classic board game "Guess Who?" This is a Facebook version where they propose that you answer disturbing questions about mutual friends, like "are they in a healthy relationship" or "are they gay".

Won 67% of the contests

Their algorithms look at what you've posted online and deduce your psychological profile.

Won 67% of the contests

Did you know Samsung also makes automated sentry guns? These guns are used at the Korean border, and can kill people autonomously. Photo by MarkBlackUltor

Won 67% of the contests

Find anyone on the popular Russian social network VKontakte, simply by taking a picture of their face. Already the app has led to problems with stalking and vigilantism. The city of Moscow wants to integrate it into all of its security cameras.

Won 67% of the contests

A credit scorer and data broker that has not only created intimate profiles on people, but also had such poor security that in 2017 its databases were hacked. Sensitive data on millions of Americans is now out in the open.

Won 67% of the contests

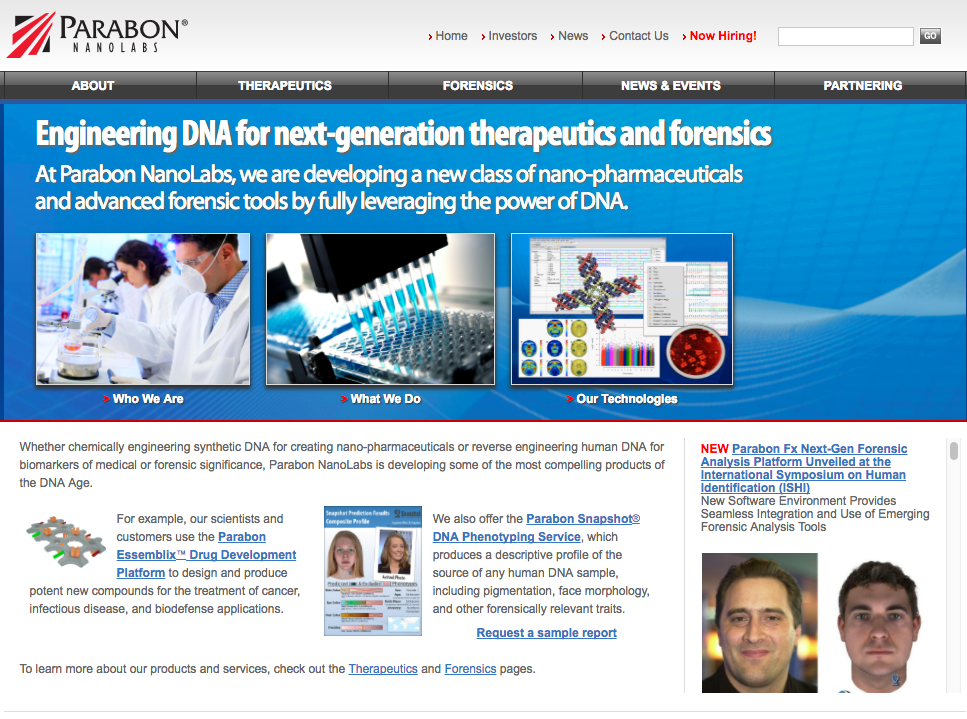

This company claims to do DNA Phenotyping - recreating what someone's face looks like from just their DNA. The question: could you convict someone of a crime based on this technology?

Won 67% of the contests

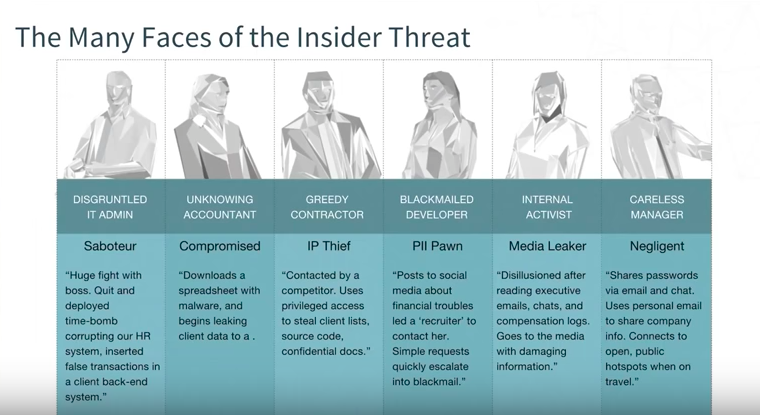

A lot of new security companies focus on 'insider threats'. RedOwl takes data from employees, including their emails and social media feeds, and generates a list of the ones you should keep an eye on, including those who might leak to the press.

Won 67% of the contests

Find out if your child has the genes it takes to become a top soccer player. The data they gather about your child's DNA could end up in a lot of places, and severely affect your child's future chances in, for example, the job market.

Won 67% of the contests

A search engine that is popular with private investigators. It allows you to find "Criminal Records, Arrests, Legal Docs, Marriages, Divorces, Court Records, Contact Info, And Much More!"

Won 66% of the contests

An Italian company that was caught selling surveillance systems to repressive governments. These IMSI Catchers (fake mobile towers) can actively surveil and track cell phone users.

Won 66% of the contests

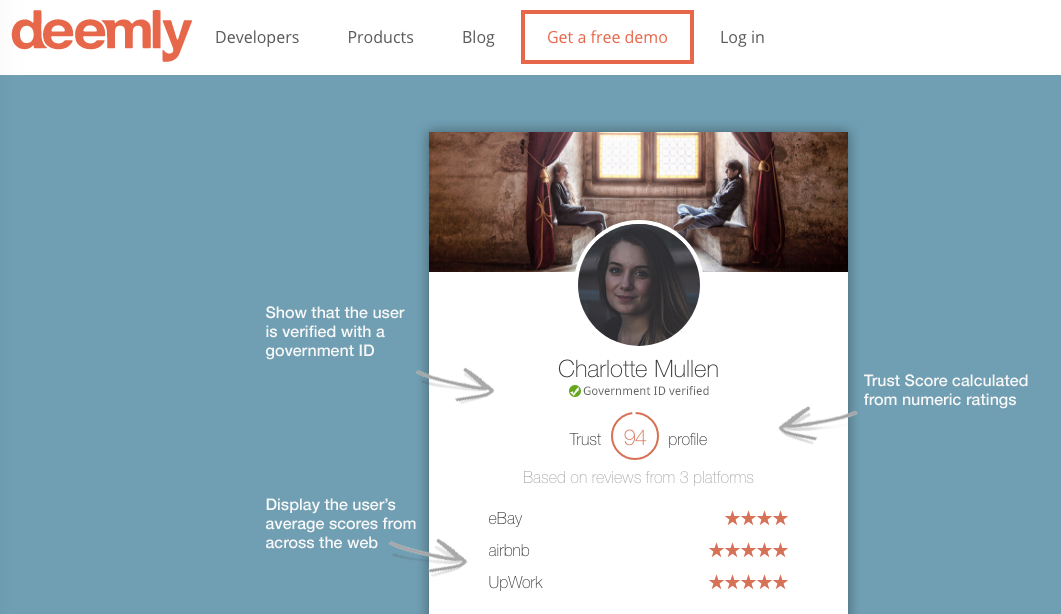

This company aggregates your reputation from various online sources to create one single score. Just like the Chinese government is doing. These reputation systems amplify social pressure, leading to an increase in censorship and risk avoidance.

Won 66% of the contests

Cloudpets are internet-connected cuddly toys that use sensors and microphones to allow children to interact with them. It was discovered that Cloudpets data was stored in an unsecured database that was publicly accessible, and that was even turning up in search engines.

Won 66% of the contests

This face recognition company's software is used in public spaces like stadiums, and matches 20 million faces per second. It can track people as they move from store to store, and alert security personnel.

Won 66% of the contests

This CIA-backed, multi-billion dollar company offers military-grade data analysis, surveillance and prediction to governments, police, cities and companies. They are considered to be as powerful as Google, but the creepy thing is: you've likely never heard of them.

Won 66% of the contests

The list of Uber's unethical practices is long: showing fake data to regulators, requesting and then cancelling rides at their competitor Lyft, rampant sexual harassment, repeatedly ignoring local laws, claiming their workers are contractors, and generally contributing to the rise of the 'precariat': people who make little money and have very little job security (a situation that breeds extremist thought).

This is a ranking of the most creepy companies and startups that collect your data or use algorithms in a dubious way.